A couple of weeks ago, Rand Fishkin posted a viral video to LinkedIn claiming that the brands mentioned in AI-cited URLs are “correlated […] but not causal” with respect to the brands that appear in AI responses.

That is, he claimed that when an AI response cites URLs, the content of those URLs has no influence over the brands mentioned in the AI response.

If you’ve been following my research on this topic, you know this is demonstrably untrue.

When AI doesn't have enough information in its training data, it does web searches to fill gaps in its knowledge. (This is called retrieval-augmented generation, or “RAG.”) In RAG, the text of the retrieved pages is passed to the model as context for its answer. It’s how ChatGPT and other AIs can answer your questions about breaking news and other topics that post-date the AI’s training data cutoff.
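To make the RAG idea concrete, here is a minimal sketch of the general pattern: search, then paste what was found into the prompt. Everything in it is an assumption for illustration (the `Page` type, the two-page `WEB_INDEX`, the brand names, and the word-overlap ranking), and it says nothing about how ChatGPT or any other commercial model actually retrieves or weighs pages.

```python
import re
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    text: str


# Hypothetical mini "web index"; a real system would call a search engine.
WEB_INDEX = [
    Page("https://example.com/best-crm-tools", "Top CRM tools this year: AcmeCRM and BoltCRM."),
    Page("https://example.com/gardening-tips", "Water tomatoes early each morning."),
]


def words(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, index: list[Page], k: int = 1) -> list[Page]:
    """Rank pages by naive word overlap with the query (a stand-in for web search)."""
    query_words = words(query)

    def overlap(page: Page) -> int:
        return len(query_words & words(page.text))

    ranked = sorted(index, key=overlap, reverse=True)
    return [page for page in ranked[:k] if overlap(page) > 0]


def build_prompt(query: str, pages: list[Page]) -> str:
    """Paste the retrieved page text into the prompt the model will answer from."""
    sources = "\n".join(f"[{i + 1}] {p.url}\n{p.text}" for i, p in enumerate(pages))
    return f"Answer using the sources below and cite them.\n\n{sources}\n\nQuestion: {query}"


query = "What are the best CRM tools?"
prompt = build_prompt(query, retrieve(query, WEB_INDEX))
print(prompt)
```

In this toy setup, the made-up brand names reach the model only because they appear on the retrieved page. Whether a real model then repeats them is exactly the kind of thing you'd have to test.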

I don’t bring this up to shame or embarrass Rand. I believe he posted the video in good faith and has nothing but good intentions. He just fell into a logical fallacy trap that many of us are prone to when learning about a new subject.

Which makes this a good case study for addressing the biggest traps I see people falling into on LinkedIn.

Because LinkedIn is flooded with misinformation about AI search optimization — by sources both well-intentioned and self-serving — and it’s important to have frameworks for separating the signal from the noise.

So here are three logical-fallacy traps to be aware of when you’re evaluating AI-search claims on the information hellscape that is LinkedIn. And how you can avoid them.

I. The Ivory Tower Trap

The trap: Assuming that someone who has an impressive academic understanding of LLMs has special insight into how commercial AI models will behave in the real world.

Someone with an academic understanding of LLMs probably reads papers about artificial intelligence on arXiv and follows the most interesting AI researchers on X: The Everything App™️. Many of these folks are genuinely knowledgeable about the ways in which LLMs can work.

On LinkedIn, they’re often easy to spot because they use their academic understanding to explain the behavior of commercial AI models.

The thing is, no one except company insiders knows all of the exact mechanisms at play in closed models like ChatGPT, Claude, and Gemini. We don’t know what harness configurations or proprietary AI model advancements these companies have adopted.

The only way to definitively know how any AI model will behave in the real world is to run experiments.

Unfortunately, I’ve seen a lot of academic posters making claims on LinkedIn that aren’t supported by concrete data from research and experimentation.

That’s not to say academic posters are always wrong. Quite the contrary — they’re often right. But I’d argue it’s often by chance, not because they have the insider knowledge or experimental data required to make these claims with justified confidence.

The ivory tower trap is perhaps the most insidious one on this list. Academic posters often have deep knowledge that lends them a halo of credibility.

And it’s the trap that ensnared Rand. He freely admitted in his video that his claims were sourced from a friend on LinkedIn, who is indeed very insightful about the general inner workings of LLMs.

Unfortunately, his friend was subtly wrong (or chose suboptimal wording) in an important respect and inspired a viral misinformation video. None of Rand’s argument was grounded in real-world data.

(Ironically, his friend very sensibly encouraged him to experiment.)

Having an academic understanding of LLMs is useful for:

forming a testable hypothesis about AI model behavior, and

hypothesizing about why an AI model behaved the way it did in experimental results.

But if you see someone on LinkedIn using an academic understanding of LLMs to make a credible-sounding claim about AI model behavior, treat it like a testable hypothesis, not a fact.

It might be true, it might not. But you won’t know for sure until it’s actually tested.
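Here's a rough sketch of what "actually tested" could look like for a claim such as "the content of cited pages doesn't influence which brands get mentioned." It assumes a hypothetical experiment in which you run the same prompt many times under two conditions (the brand is present on the source page vs. removed from it) and count how often the response mentions the brand. The counts below are illustrative placeholders, not results, and the two-proportion z-test is just one reasonable way to compare the rates.

```python
import math


def two_proportion_z(hits_a: int, n_a: int, hits_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two mention rates."""
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both conditions are all hits or all misses; there is no difference to test.
        return 0.0, 1.0
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the normal distribution
    return z, p_value


# PLACEHOLDER counts for illustration only (not real measurements):
# responses mentioning the brand when it IS on the source page vs. when it is NOT.
with_brand_hits, with_brand_runs = 42, 50
without_brand_hits, without_brand_runs = 9, 50

z, p_value = two_proportion_z(with_brand_hits, with_brand_runs, without_brand_hits, without_brand_runs)
print(f"mention rate with brand on page:    {with_brand_hits / with_brand_runs:.0%}")
print(f"mention rate without brand on page: {without_brand_hits / without_brand_runs:.0%}")
print(f"z = {z:.2f}, p = {p_value:.4g}")
```

Because you change the page content yourself and hold everything else constant, a large gap between the two rates would be evidence of influence rather than mere correlation, at least for the model and query you tested.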

(I guess you could extend this approach to any claims about AI model behavior. Default to skepticism until and unless there’s data!)

II. The Generalization Trap

The trap: Assuming that you can make generalizations about AI behavior across models or query topics.

In reality, models from different providers (e.g., OpenAI’s ChatGPT vs. Google’s Gemini) behave differently to some degree. Even different models from the same provider can behave wildly differently.

And within any model, the AI behaves differently (e.g., citing different types of content) depending on the query topic. For example:

What part of the funnel does it address (e.g., “What is an AI agent?” vs. “What’s the best AI agent for marketing?”)?

Which industry is it addressing? Which product/service category?

Is it about news or evergreen information?

Generalizations are the most pervasive problem with claims from “GEO experts” on LinkedIn. You’ve almost certainly seen some of the more common ones:

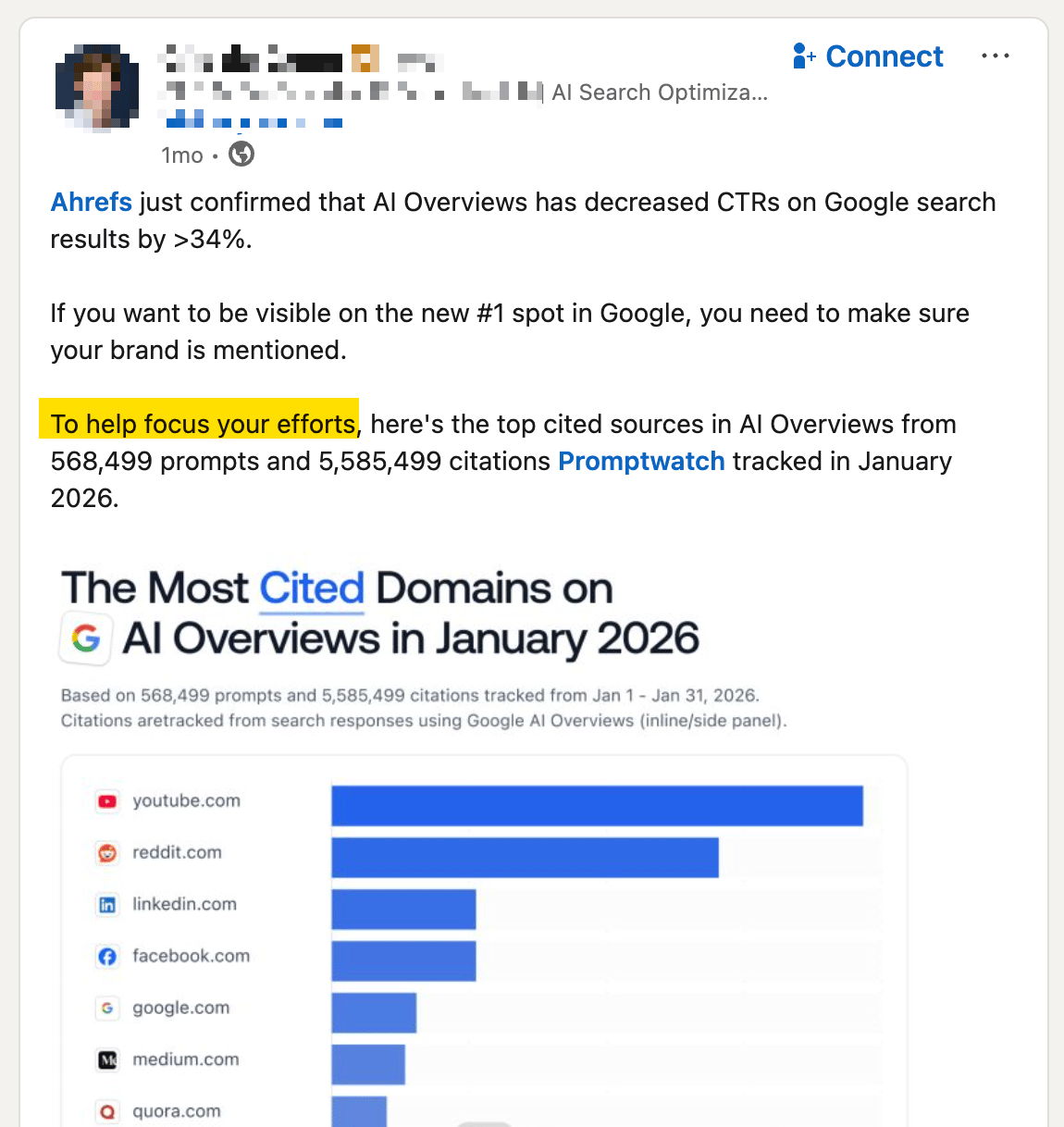

CLAIM 1: Reddit is the most-cited domain! Your brand has to be on Reddit!

(…or on YouTube or on LinkedIn, depending on the month.)

There’s being wrong, and there’s being whatever this guy is.

THE REALITY: Citation patterns are completely different for every industry and product category.

Sometimes Reddit is cited a lot, sometimes it isn't. And when it is, it's a complete crapshoot if you try to guess which Reddit URLs, out of the hundreds or thousands on a particular topic, will actually matter. Any given Reddit URL is rarely cited, if ever.

In fact, you can ignore all the lists you see on LinkedIn about “the most-cited domains.” Interesting? Sure. Actionable? No.

The only citations that matter are the specific URLs being cited by AI models that your potential customers are using to submit queries about your product/service category.

Narrator: This will not help focus your efforts.

CLAIM 2: AI models are citing fewer listicles! Listicles are less important!

THE REALITY: When people ask ChatGPT for product and service recommendations, listicles are absolutely still dominant in B2B. Search for any product or service category in Contender and see this for yourself. (That's not to say you should go overboard publishing listicles; the point is, follow the data.)

CLAIM 3: Use Schema markup if you want to be visible in AI responses!

THE REALITY:

Some types of schema (i.e., structured data) matter, some don't.

It matters differently for different query topics. For example, it matters more for queries where the results are consumer goods with e-commerce links. It matters dramatically less for B2B.

It matters differently to different AI models. We know Copilot uses it because Microsoft told us so. For other models, it depends.

tl;dr: Ignore all the generalizations. If someone makes a proclamation about AI behavior without saying which specific models and query topics it applies to, treat it with so many grains of salt.

III. The Social Proof Trap

The trap: Assuming that someone who is good at amassing followers and scoring internet points is knowledgeable in anything besides being good at social media.

Rule of thumb: Never take advice from someone who says they can help you “rank #1 on ChatGPT.”

Our brains love social proof because it helps us take shortcuts: “A lot of people like this thing, so it must be good.”

Except, on social media, people give their endorsements (liking, reposting, following, etc.) for so many reasons that have nothing to do with the credibility of the person being endorsed or the validity of their ideas.

People engage because an idea sounds good, it aligns with their own philosophy, they like the person sharing the information, or they just want to suck up to an influencer. Thoughtful and nuanced analysis isn’t usually part of the equation.

This is a tricky trap because often a claim being made sounds credible and a lot of people are liking and sharing it.

“If so many people are endorsing this believable-sounding thing, there must be something there.”

Plus, in some cases, a person who is winning at social media actually is an expert in another domain, so they’ve developed a credibility halo.

Rand, for example, went pretty deep into SEO for a long time. His leadership and relative success in that domain lend credibility to everything else he does or says. But he doesn’t know much about AI model behavior (even though he’s done some fun and useful experiments), which is why he asks people for help on LinkedIn.

So how do you avoid the social proof trap?

How to avoid the traps

It’s a simple, two-part litmus test:

1. Has the claim been tested? Look for reproducible data sourced from experimentation or research. Claims based on an academic understanding of LLMs don’t count.

2. Got data? Cool. For which models and which query topics? AI citation patterns and other behaviors differ by model and by query topic, including funnel stage, industry, and product category.

Did I miss anything? I'd love to hear about claims you've seen on LinkedIn that didn't hold up when you applied this litmus test.